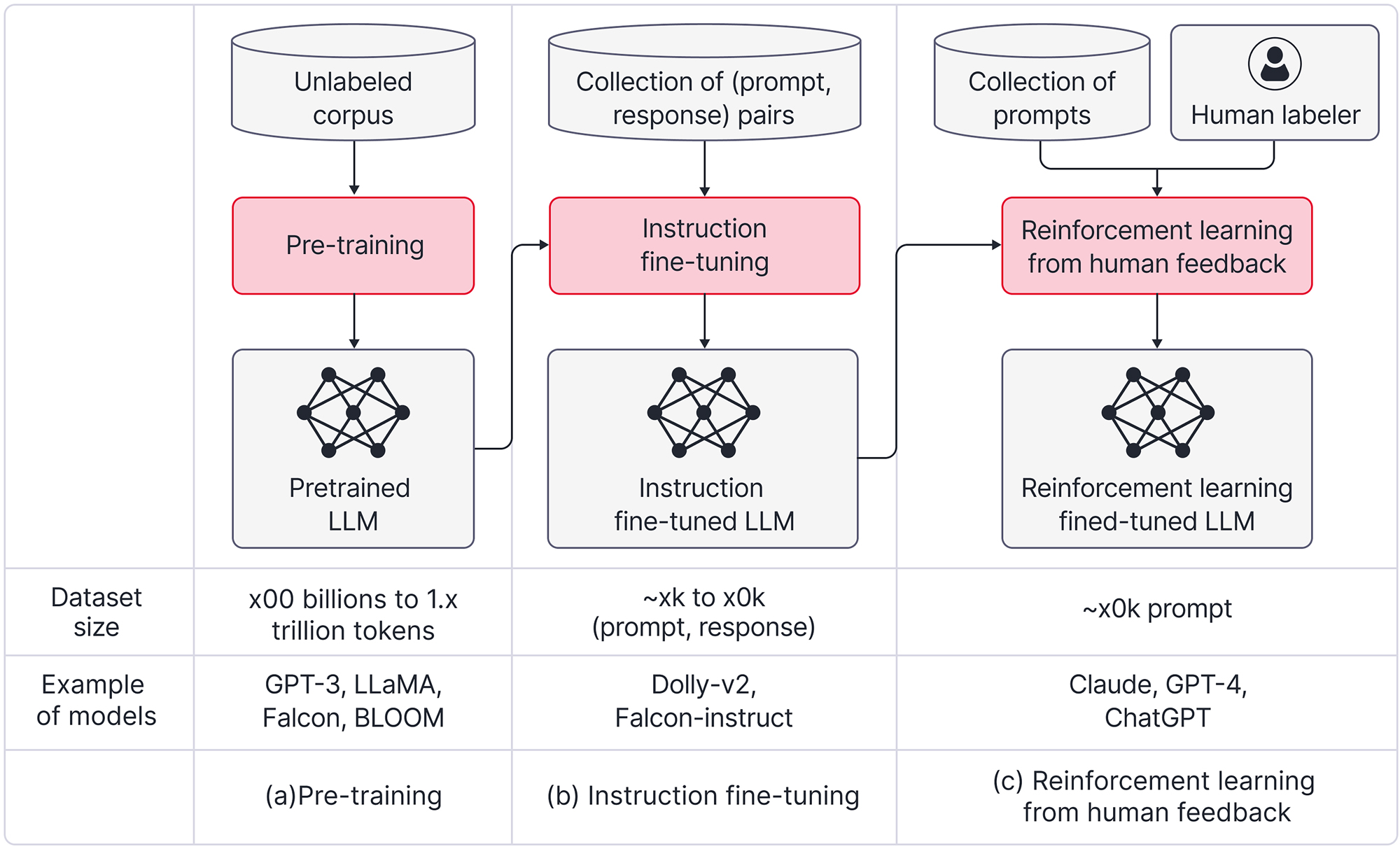

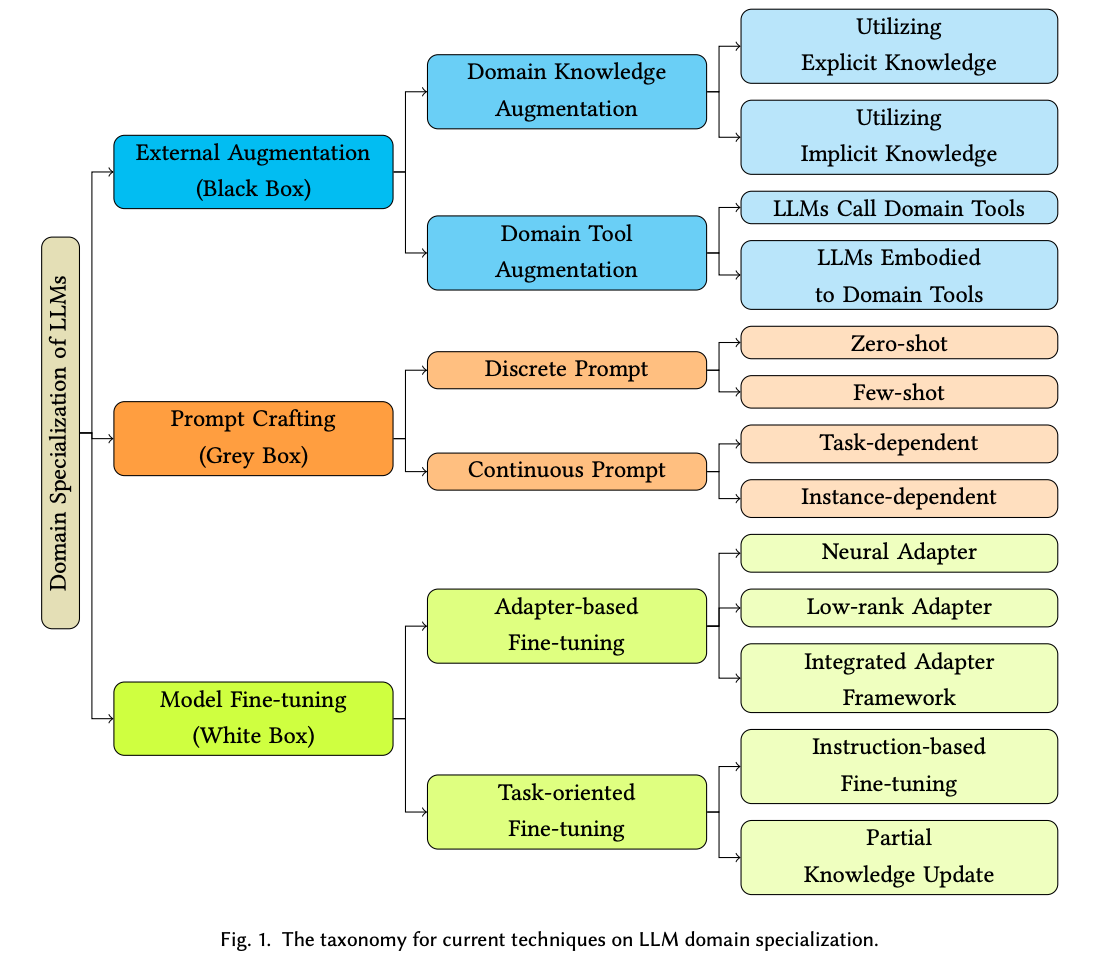

Fine-tuning LLMs can help build custom, task-specific, expert models. Read this blog to learn the methods, steps, and process for fine-tuning with RLHF.

In discussions about why ChatGPT has captured our fascination, two common themes emerge:

1. Scale: Increasing data and computational resources.

2. User Experience (UX): Transitioning from prompt-based interactions to more natural chat interfaces.

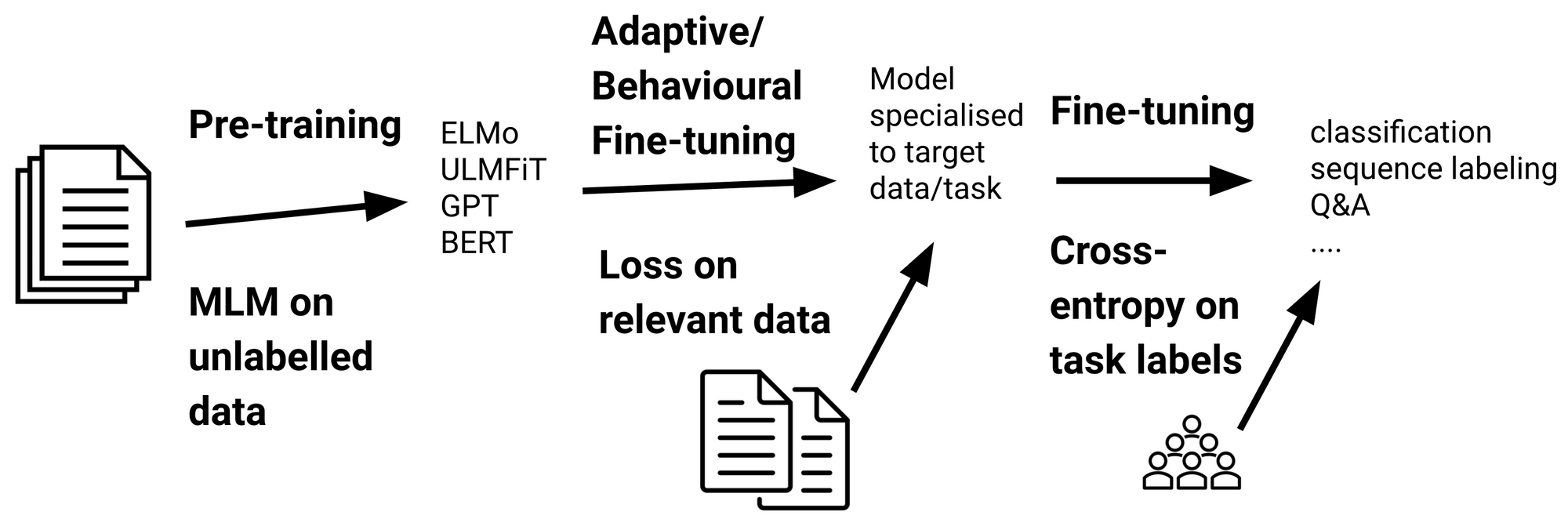

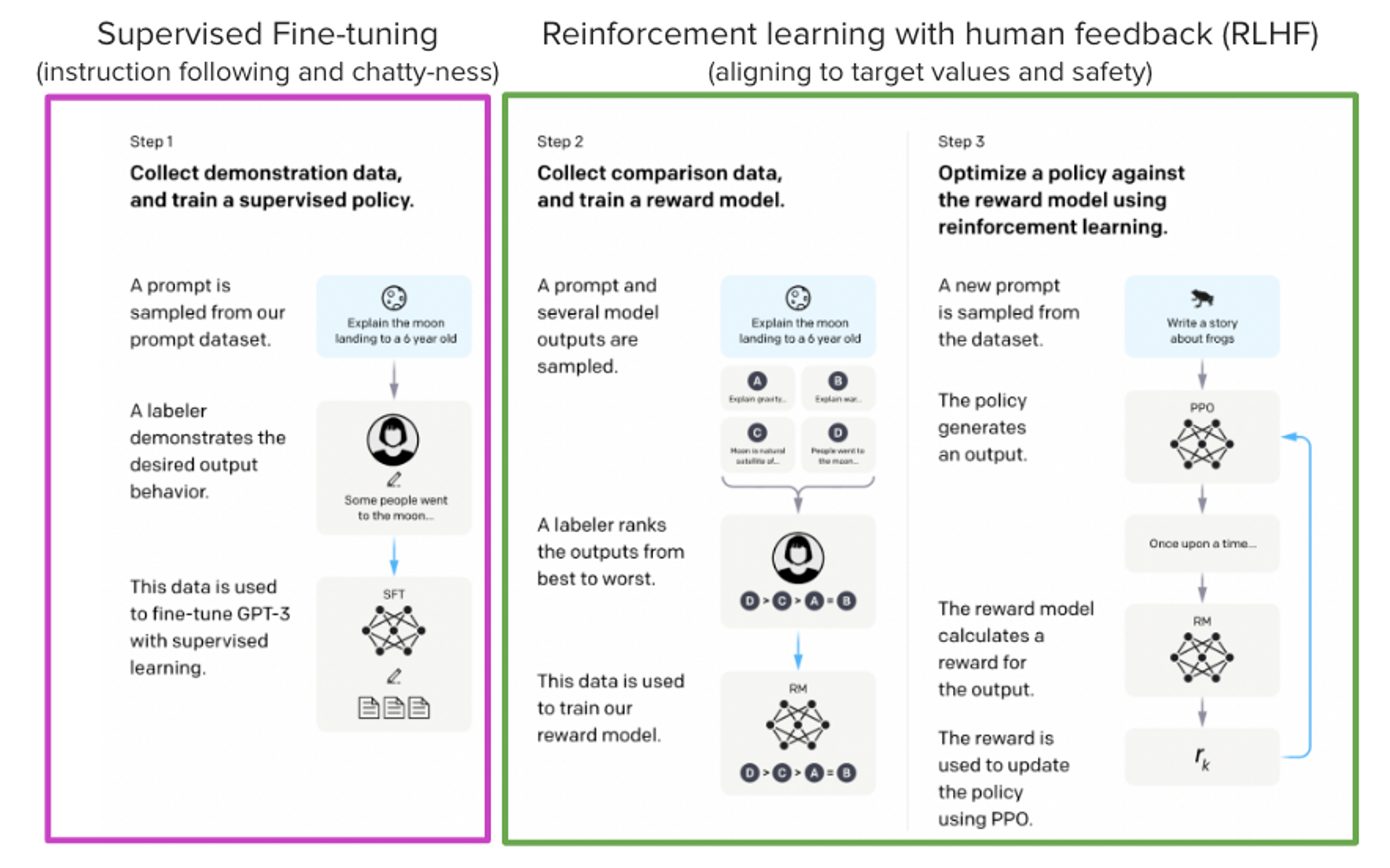

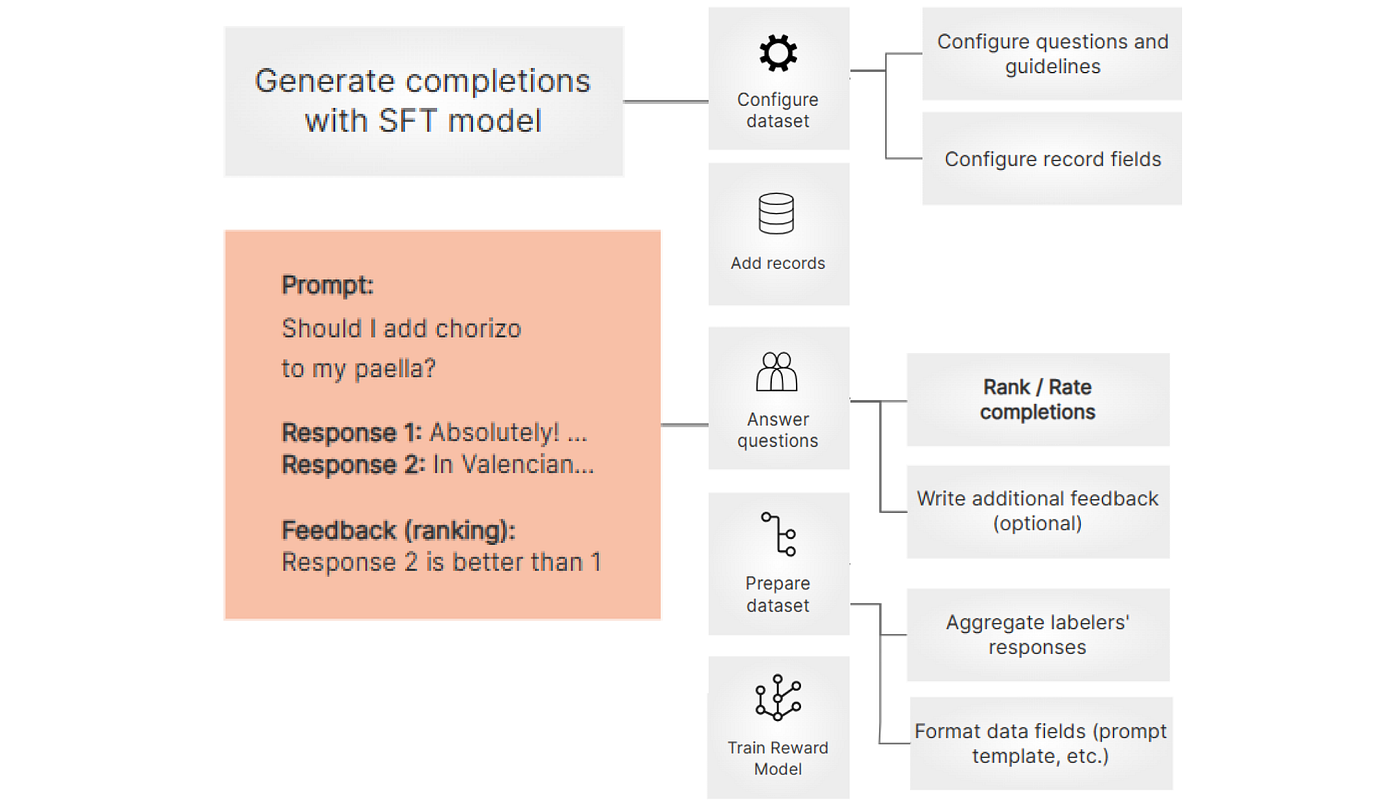

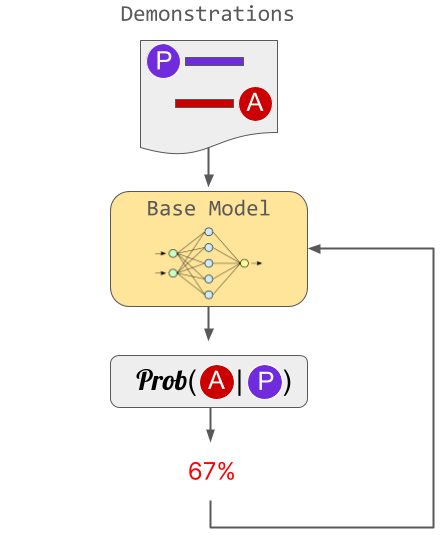

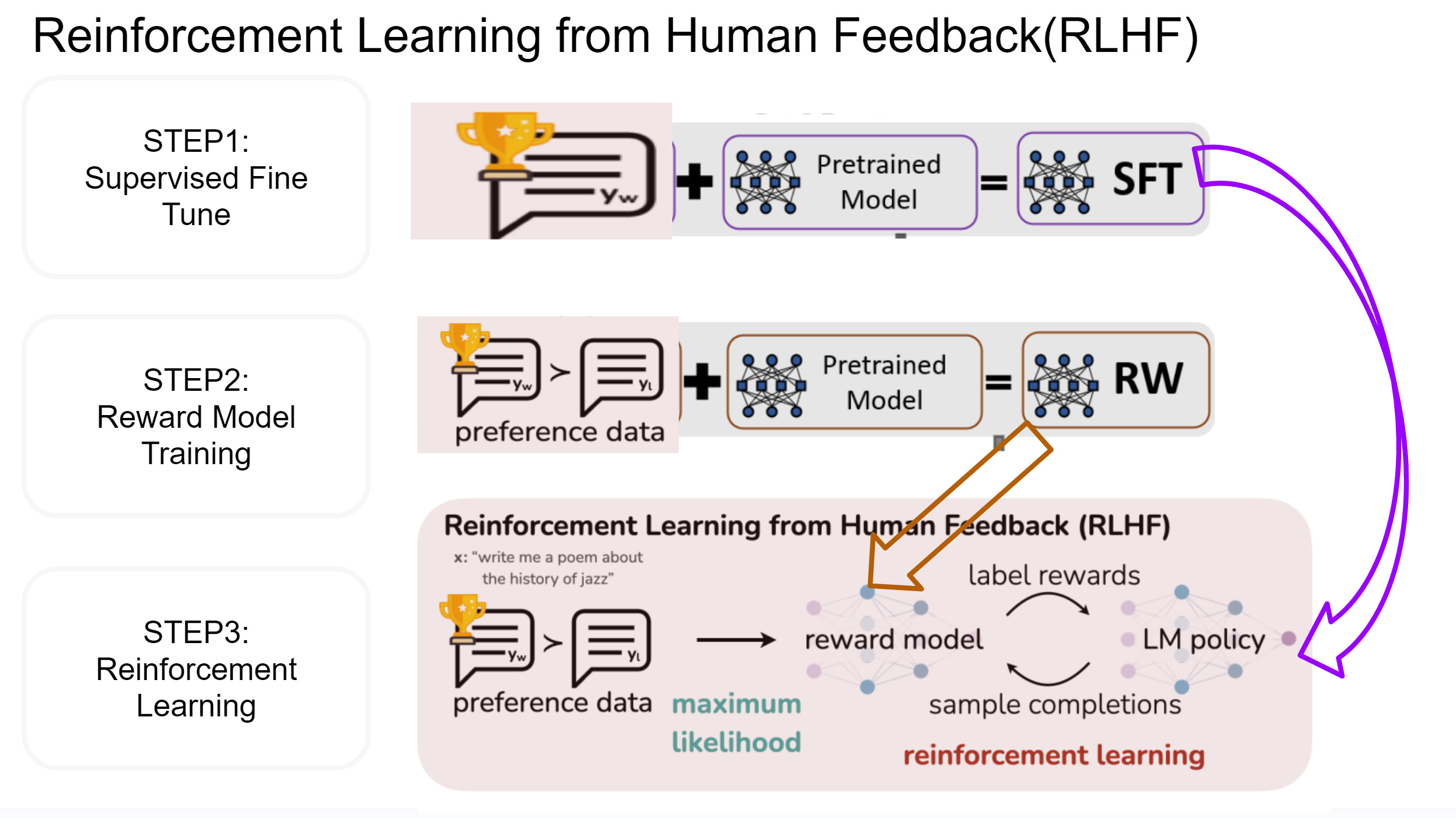

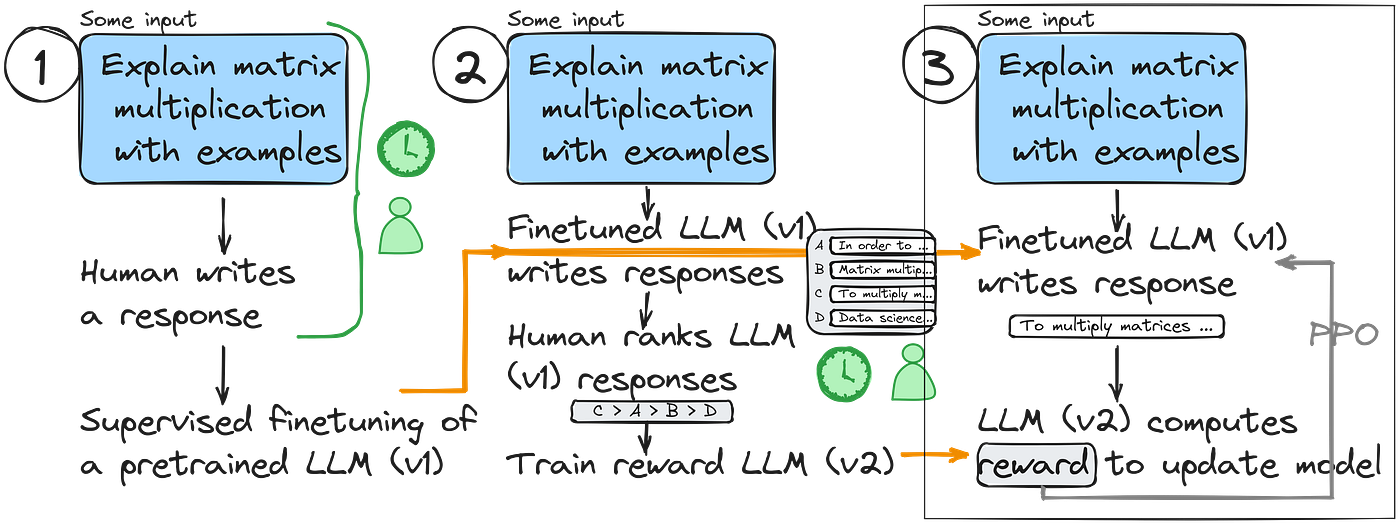

However, there's an aspect often overlooked – the remarkable technical innovation behind the success of models like ChatGPT. One particularly ingenious concept is Reinforcement Learning from Human Feedback (RLHF), which combines reinforcement learni

StackLLaMA: A hands-on guide to train LLaMA with RLHF

The complete guide to LLM fine-tuning - TechTalks

Fine tuning Large Language Models (using Instruction Tuning and RLHF)

Building a Reward Model for Your LLM Using RLHF in Python, by Fareed Khan

The Full Story of Large Language Models and RLHF

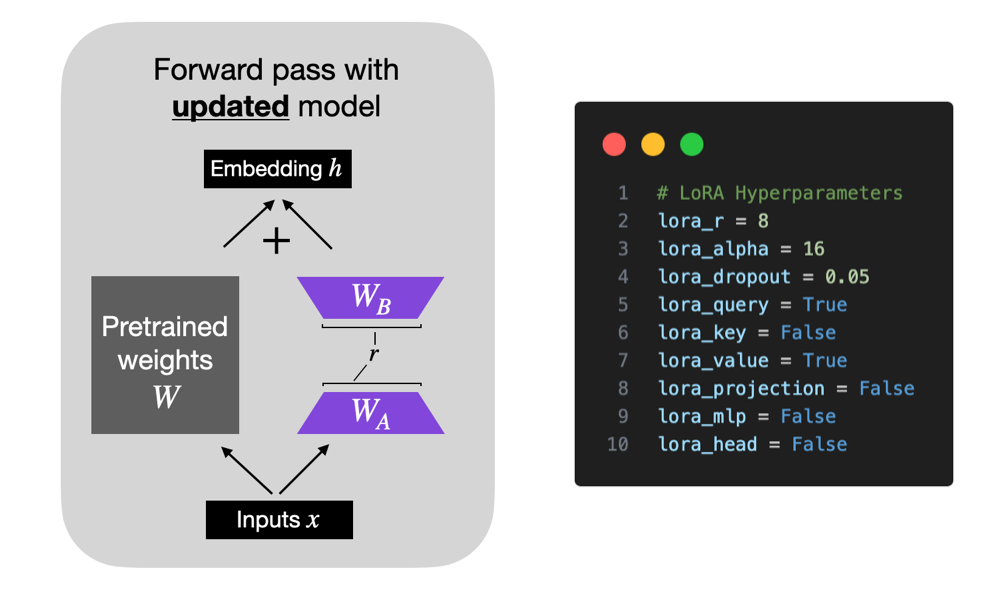

Finetuning LLMs with LoRA and QLoRA: Insights from Hundreds of Experiments - Lightning AI

A High-level Overview of Large Language Models - Borealis AI

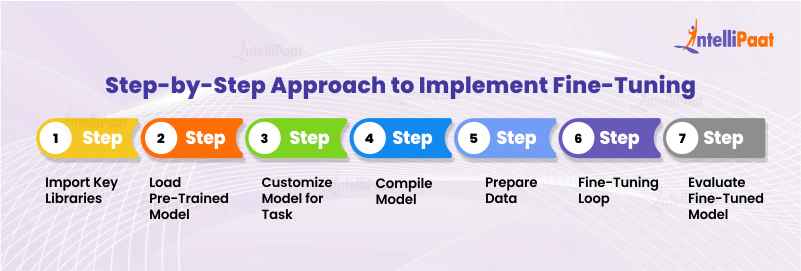

A Comprehensive Guide to fine-tuning LLMs using RLHF (Part-1)

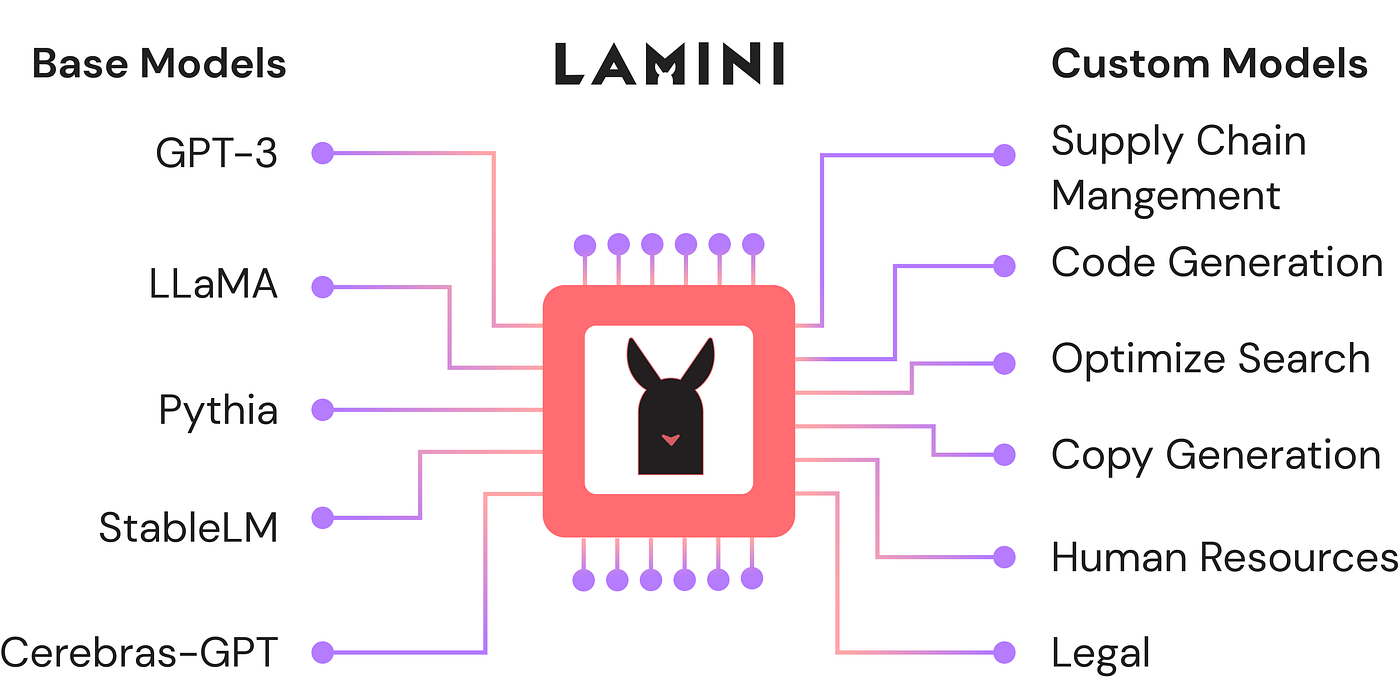

Inside Lamini: A New Framework for Fine-Tuning LLMs, by Jesus Rodriguez

Fine Tuning LLMs - learnings from the DeepLearning SF Meetup

Fine-Tuning LLMs with Direct Preference Optimization

LangChain 101: Part 2d. Fine-tuning LLMs with Human Feedback, by Ivan Reznikov