A comprehensive guide to retrieval-augmented generation (RAG), fine-tuning, and strategies for combining them in large language models (LLMs).

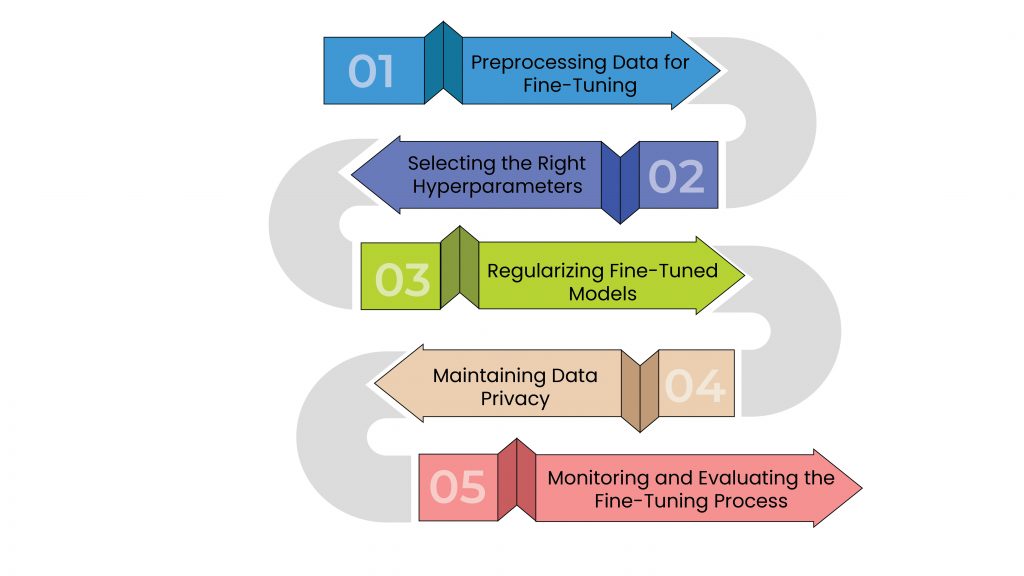
