Introducing MPT-30B, a new, more powerful member of our Foundation Series of open-source models, trained with an 8k context length on NVIDIA H100 Tensor Core GPUs.

Can large language models reason about medical questions? - ScienceDirect

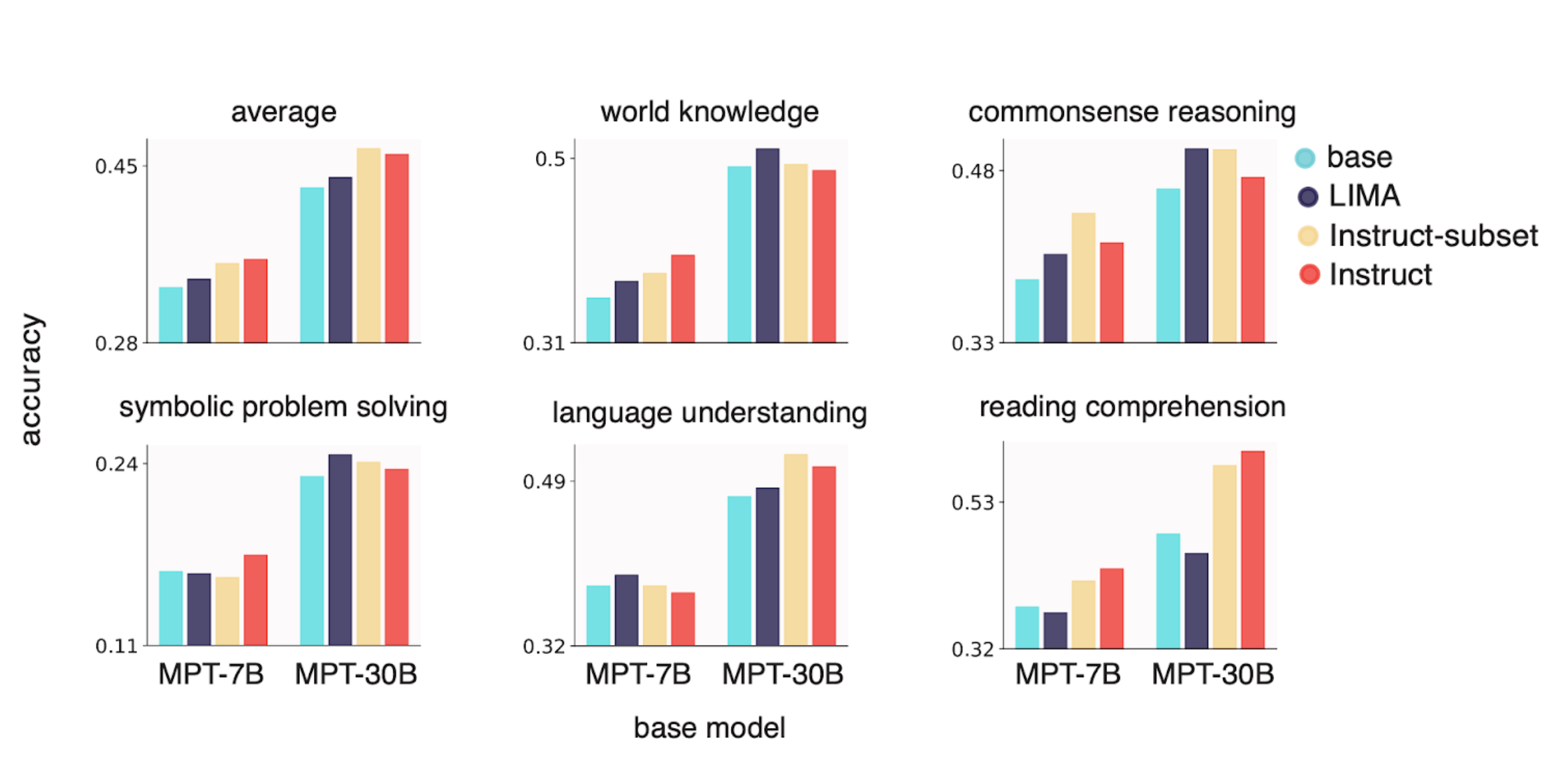

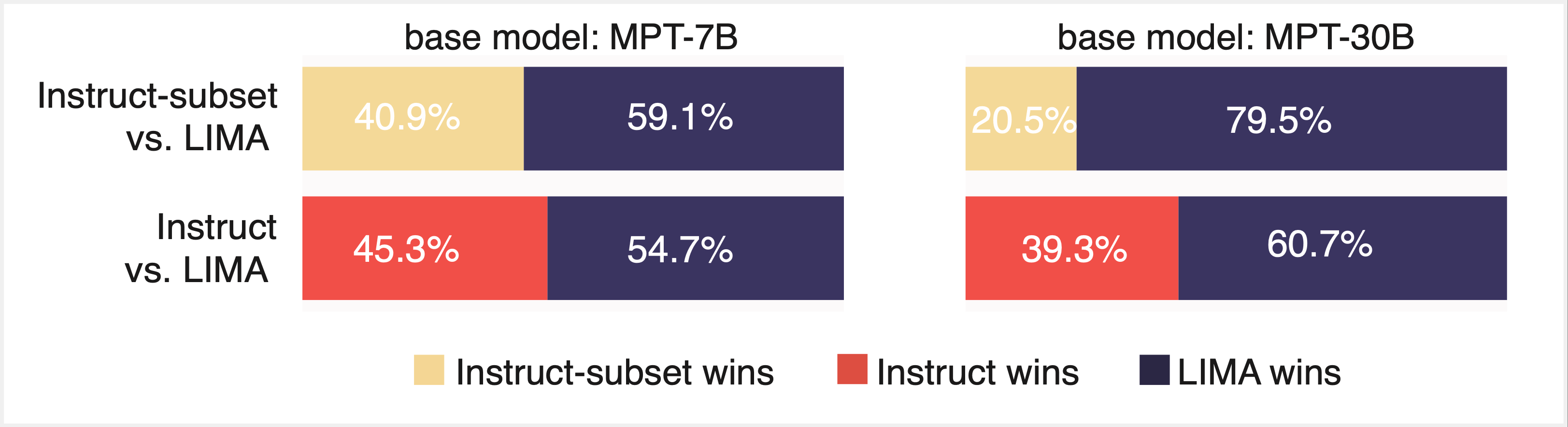

LIMIT: Less Is More for Instruction Tuning

MosaicML's latest models outperform GPT-3 with just 30B parameters

The History of Open-Source LLMs: Better Base Models (Part Two), by Cameron R. Wolfe, Ph.D.

Survival of the Fittest: Compact Generative AI Models Are the Future for Cost-Effective AI at Scale - Intel Community

MosaicML releases open-source 30B parameter AI model for enterprise applications - SiliconANGLE

12 Open Source LLMs to Watch

Train Faster & Cheaper on AWS with MosaicML Composer

MPT-30B's release: first open source commercial API competing with OpenAI, by BoredGeekSociety

Centralized provisioning of large language models for a research community

Timeline of Transformer Models / Large Language Models (AI / ML / LLM)
